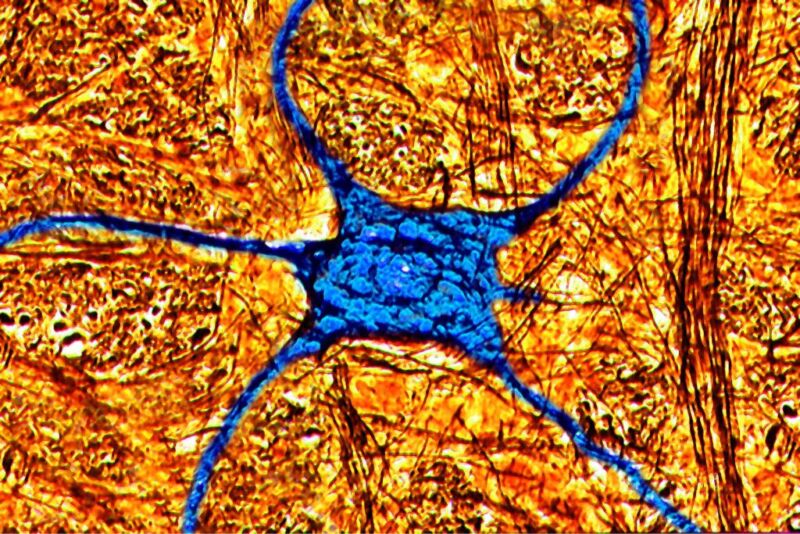

BSIP/Universal Images Group via Getty Images

“Language is a huge field, and we are novices in this. We know a lot about how different areas of the brain are involved in linguistic tasks, but the details are not very clear,” says Mohsen Jamali, a computational neuroscience researcher at Harvard Medical School who led a recent study into the mechanism of human language comprehension.

“What was unique in our work was that we were looking at single neurons. There is a lot of studies like that on animals—studies in electrophysiology, but they are very limited in humans. We had a unique opportunity to access neurons in humans,” Jamali adds.

Probing the brain

Jamali’s experiment involved playing recorded sets of words to patients who, for clinical reasons, had implants that monitored the activity of neurons located in their left prefrontal cortex—the area that’s largely responsible for processing language. “We had data from two types of electrodes: the old-fashioned tungsten microarrays that can pick the activity of a few neurons; and the Neuropixel probes which are the latest development in electrophysiology,” Jamali says. The Neuropixels were first inserted in human patients in 2022 and could record the activity of over a hundred neurons.

“So we were in the operation room and asked the patient to participate. We had a mixture of sentences and words, including gibberish sounds that weren’t actual words but sounded like words. We also had a short story about Elvis,” Jamali explains. He says the goal was to figure out whether there was some structure to the neuronal response to language. Gibberish words served as a control to see whether the neurons responded to them differently.

“The electrodes we used in the study registered voltage—it was a continuous signal at 30 kHz sampling rate—and the critical part was to dissociate how many neurons we had in each recording channel. We used statistical analysis to separate individual neurons in the signal,” Jamali says. Then, his team synchronized the neuronal activity signals with the recordings played to the patients down to a millisecond and started analyzing the data they gathered.
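The alignment step can be illustrated with a minimal sketch. It assumes spike sorting has already produced a list of sample indices for one neuron. The function names, the 500 ms counting window, and the data are hypothetical and not taken from the study; only the 30 kHz sampling rate comes from Jamali's description.

```python
SAMPLING_RATE_HZ = 30_000  # sampling rate quoted by Jamali


def samples_to_ms(sample_index: int) -> float:
    """Convert a sample index in the recording to milliseconds."""
    return sample_index * 1000.0 / SAMPLING_RATE_HZ


def spikes_per_word(spike_samples, word_onsets_ms, window_ms=500.0):
    """Count spikes falling in [onset, onset + window_ms) for each word onset.

    window_ms is an illustrative choice, not a value from the study.
    """
    spike_times_ms = [samples_to_ms(s) for s in spike_samples]
    counts = []
    for onset in word_onsets_ms:
        n = sum(1 for t in spike_times_ms if onset <= t < onset + window_ms)
        counts.append(n)
    return counts


# Hypothetical data: spike sample indices for one sorted neuron,
# and the onset times (in ms) of two words in the audio recording.
spike_samples = [3_000, 6_000, 9_000, 33_000, 45_000]
word_onsets_ms = [0.0, 1000.0]

counts = spikes_per_word(spike_samples, word_onsets_ms)
print(counts)
```

At 30 kHz, one millisecond spans 30 samples, so millisecond-level synchronization is well within the resolution of the recording.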

Putting words in drawers

“First, we translated words in our sets to vectors,” Jamali says. Specifically, his team used Word2Vec, a technique from computer science that finds relationships between words in a large corpus of text. Word2Vec can tell whether certain words have something in common, for example whether they are synonyms. “Each word was represented by a vector in a 300-dimensional space. Then we just looked at the distance between those vectors and if the distance was close, we concluded the words belonged in the same category,” Jamali explains.

The team then used these vectors to identify words that clustered together, which suggested the words had something in common; they later confirmed this by examining which words ended up in each cluster. Next, they determined whether specific neurons responded differently to different clusters of words. It turned out they did.
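The idea of grouping words by the distance between their vectors can be sketched roughly as follows. This is an illustration only: the three-dimensional vectors are made up (real Word2Vec embeddings here had 300 dimensions), cosine similarity is one common choice of distance measure, and the greedy grouping with a 0.8 threshold is a simple stand-in, not the clustering method the researchers used.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def group_words(vectors, threshold=0.8):
    """Greedy grouping: a word joins the first group whose first member is
    similar enough to it; otherwise it starts a new group."""
    groups = []
    for word, vec in vectors.items():
        for group in groups:
            if cosine_similarity(vec, vectors[group[0]]) >= threshold:
                group.append(word)
                break
        else:
            groups.append([word])
    return groups


# Made-up toy vectors, not real Word2Vec embeddings.
toy_vectors = {
    "dog": [0.9, 0.1, 0.0],
    "cat": [0.8, 0.2, 0.1],
    "rain": [0.1, 0.9, 0.1],
    "wind": [0.0, 0.8, 0.2],
    "joy": [0.1, 0.1, 0.9],
}

groups = group_words(toy_vectors)
print(groups)
```

With these toy vectors, the animal words, the weather words, and the feeling word end up in three separate groups, which mirrors in miniature the kind of semantic domains described in the study.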

“We ended up with nine clusters. We looked at which words were in those clusters and labeled them,” Jamali says. Each cluster corresponded to a neat semantic domain. Some specialized neurons responded to words referring to animals, while other groups responded to words referring to feelings, activities, names, weather, and so on. “Most of the neurons we registered had one preferred domain. Some had more, like two or three,” Jamali explains.

The mechanics of comprehension

The team also tested if the neurons were triggered by the mere sound of a word or by its meaning. “Apart from the gibberish words, another control we used in the study was homophones,” Jamali says. The idea was to test if the neurons responded differently to the word “sun” and the word “son,” for example.

It turned out that the response changed based on context. When the sentence made it clear the word referred to a star, the sound activated neurons that respond to weather phenomena. When it was clear that the same sound referred to a person, it activated neurons that respond to words for relatives. “We also presented the same words at random without any context and found that it didn’t elicit as strong a response as when the context was available,” Jamali says.

But language processing in our brains must involve more than different semantic categories being handled by different groups of neurons.

“There are many unanswered questions in linguistic processing. One of them is how much a structure matters, the syntax. Is it represented by a distributed network, or can we find a subset of neurons that encode structure rather than meaning?” Jamali asks. Another thing his team wants to study is what neural processing looks like during speech production, in addition to comprehension. “How are those two processes related in terms of brain areas and the way the information is processed?” Jamali adds.

The last thing—and according to Jamali the most challenging thing—is using the Neuropixel probes to see how information is processed across different layers of the brain. “The Neuropixel probe travels through the depths of the cortex, and we can look at the neurons along the electrode and say like, ‘OK, the information from this layer, which is responsible for semantics, goes to this layer, which is responsible for something else.’ We want to learn how much information is processed by each layer. This should be challenging, but it would be interesting to see how different areas of the brain are involved at the same time when presented with linguistic stimuli,” Jamali concludes.

Nature, 2024. DOI: 10.1038/s41586-024-07643-2