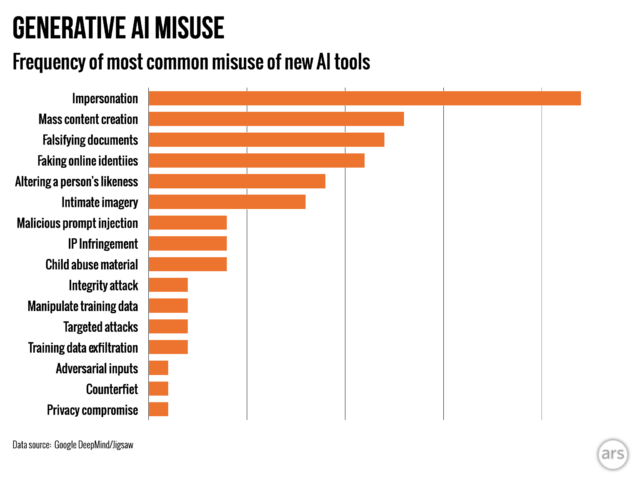

Artificial intelligence-generated “deepfakes” that impersonate politicians and celebrities are far more prevalent than efforts to use AI to assist cyber attacks, according to the first research by Google’s DeepMind division into the most common malicious uses of the cutting-edge technology.

The study said the creation of realistic but fake images, video, and audio of people was almost twice as common as the next highest misuse of generative AI tools: the falsifying of information using text-based tools, such as chatbots, to generate misinformation to post online.

The analysis, conducted with the search group’s research and development unit Jigsaw, found that the most common goal of actors misusing generative AI was to shape or influence public opinion. That accounted for 27 percent of uses, feeding into fears over how deepfakes might influence elections globally this year.

Deepfakes of UK Prime Minister Rishi Sunak, as well as other global leaders, have appeared on TikTok, X, and Instagram in recent months. UK voters go to the polls next week in a general election.

Concern is widespread that, despite social media platforms’ efforts to label or remove such content, audiences may not recognize these as fake, and dissemination of the content could sway voters.

Ardi Janjeva, research associate at The Alan Turing Institute, called “especially pertinent” the paper’s finding that the contamination of publicly accessible information with AI-generated content could “distort our collective understanding of sociopolitical reality.”

Janjeva added: “Even if we are uncertain about the impact that deepfakes have on voting behavior, this distortion may be harder to spot in the immediate term and poses long-term risks to our democracies.”

The study is the first of its kind by DeepMind, Google’s AI unit led by Sir Demis Hassabis, and is an attempt to quantify the risks from the use of generative AI tools, which the world’s biggest technology companies have rushed out to the public in search of huge profits.

As generative products such as OpenAI’s ChatGPT and Google’s Gemini become more widely used, AI companies are beginning to monitor the flood of misinformation and other potentially harmful or unethical content created by their tools.

In May, OpenAI released research revealing operations linked to Russia, China, Iran, and Israel had been using its tools to create and spread disinformation.

“There had been a lot of understandable concern around quite sophisticated cyber attacks facilitated by these tools,” said Nahema Marchal, lead author of the study and researcher at Google DeepMind. “Whereas what we saw were fairly common misuses of GenAI [such as deepfakes that] might go under the radar a little bit more.”

Google DeepMind and Jigsaw’s researchers analyzed around 200 observed incidents of misuse between January 2023 and March 2024, taken from social media platforms X and Reddit, as well as online blogs and media reports of misuse.


The second most common motivation behind misuse was to make money, whether offering services to create deepfakes, including generating naked depictions of real people, or using generative AI to create swaths of content, such as fake news articles.

The research found that most incidents use easily accessible tools, “requiring minimal technical expertise,” meaning more bad actors can misuse generative AI.

Google DeepMind’s research will influence how it improves its evaluations to test models for safety, and the company hopes the findings will also affect how its competitors and other stakeholders view how “harms are manifesting.”

© 2024 The Financial Times Ltd. All rights reserved. Not to be redistributed, copied, or modified in any way.